Scientific study of sound perception and audiology

Psychoacoustics is the branch of psychophysics involving the scientific study of sound perception and audiology: how the human auditory system perceives various sounds. More specifically, it is the branch of science studying the psychological responses associated with sound (including noise, speech, and music). Psychoacoustics is an interdisciplinary field including psychology, acoustics, electronic engineering, physics, biology, physiology, and computer science.[1]

Background

Hearing is not a purely mechanical phenomenon of wave propagation, but is also a sensory and perceptual event; in other words, when a person hears something, that something arrives at the ear as a mechanical sound wave traveling through the air, but within the ear it is transformed into neural action potentials. The outer hair cells (OHC) of a mammalian cochlea give rise to enhanced sensitivity and better[clarification needed] frequency resolution of the mechanical response of the cochlear partition. The resulting nerve pulses then travel to the brain, where they are perceived. Hence, in many problems in acoustics, such as audio processing, it is advantageous to take into account not just the mechanics of the environment, but also the fact that both the ear and the brain are involved in a person's listening experience.[clarification needed][citation needed]

The inner ear, for example, does significant signal processing in converting sound waveforms into neural stimuli, so certain differences between waveforms may be imperceptible.[2] Data compression techniques, such as MP3, make use of this fact.[3]

In addition, the ear has a nonlinear response to sounds of different intensity levels; this nonlinear response is called loudness. Telephone networks and audio noise reduction systems make use of this fact by nonlinearly compressing data samples before transmission and then expanding them for playback.[4] Another effect of the ear's nonlinear response is that sounds that are close in frequency produce phantom beat notes, or intermodulation distortion products.[5]
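The compress-then-expand scheme used by telephone networks (companding) can be sketched with the continuous μ-law curve. This is a minimal illustration, assuming μ = 255 (the value used in North American and Japanese G.711 telephony) and samples normalized to [-1, 1]; real G.711 codecs use a segmented, piecewise-linear approximation of this curve, and all helper names here are hypothetical.

```python
import math

MU = 255.0  # mu-law parameter (assumed: the G.711 value for North America/Japan)


def mu_law_compress(x: float, mu: float = MU) -> float:
    """Logarithmically compress a sample in [-1, 1] onto [-1, 1]."""
    return math.copysign(math.log1p(mu * abs(x)) / math.log1p(mu), x)


def mu_law_expand(y: float, mu: float = MU) -> float:
    """Exact inverse of mu_law_compress."""
    return math.copysign(((1.0 + mu) ** abs(y) - 1.0) / mu, y)


def quantize(v: float, bits: int = 8) -> float:
    """Uniform mid-rise quantizer for values in [-1, 1] (hypothetical helper)."""
    levels = 2 ** (bits - 1)
    q = min(max(math.floor(v * levels), -levels), levels - 1)  # clip to valid codes
    return (q + 0.5) / levels


def relative_error(x: float, bits: int = 8, companded: bool = True) -> float:
    """Relative error after quantizing a nonzero x with or without companding."""
    if companded:
        x_hat = mu_law_expand(quantize(mu_law_compress(x), bits))
    else:
        x_hat = quantize(x, bits)
    return abs(x_hat - x) / abs(x)


if __name__ == "__main__":
    quiet = 0.01  # a quiet sample, 40 dB below full scale
    print(f"linear 8-bit: {relative_error(quiet, companded=False):.1%}")
    print(f"mu-law 8-bit: {relative_error(quiet, companded=True):.1%}")
```

For the quiet sample the companded path should give roughly a tenfold smaller relative error than plain 8-bit quantization; the price is coarser steps for loud samples, where the ear is less sensitive to small relative changes.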

The term psychoacoustics also arises in discussions about cognitive psychology and the effects that personal expectations, prejudices, and predispositions may have on how listeners evaluate and compare sonic aesthetics and acuity, and on how they judge the relative qualities of various musical instruments and performers. The expression that one "hears what one wants (or expects) to hear" may apply in such discussions.[citation needed]

Limits of perception

An equal-loudness contour. Note peak sensitivity around 2–4 kHz, in the middle of the voice frequency band.


The human ear can nominally hear sounds in the range 20 Hz (0.02 kHz) to 20,000 Hz (20 kHz). The upper limit tends to decrease with age; most adults are unable to hear above 16 kHz. The lowest frequency that has been identified as a musical tone is 12 Hz under ideal laboratory conditions.[6] Tones between 4 and 16 Hz can be perceived via the body's sense of touch.

Humans have been measured to discriminate time separations between audio signals of less than 10 microseconds. Although a 10 µs period corresponds to a frequency of 100 kHz, this does not mean that frequencies above 100 kHz are audible; rather, time discrimination is not directly coupled with frequency range.[7][8]

The frequency resolution of the ear is about 3.6 Hz within the octave of 1000–2000 Hz. That is, changes in frequency larger than 3.6 Hz can be perceived as changes in pitch in a clinical setting.[6]

However, even smaller frequency differences can be perceived through other means. For example, when two tones of slightly different frequency sound together, their interference can often be heard as a repetitive variation in loudness. This amplitude modulation occurs at a frequency equal to the difference between the frequencies of the two tones and is known as beating.
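The beat frequency follows from the sum-to-product identity sin a + sin b = 2 cos((a − b)/2) sin((a + b)/2): two equal-amplitude tones add up to a tone at their mean frequency whose amplitude envelope |2 cos(π(f₁ − f₂)t)| reaches a maximum |f₁ − f₂| times per second. The sketch below checks this numerically; the function names and the 1000/1002 Hz example are illustrative choices, not taken from the text.

```python
import math


def two_tone(t: float, f1: float, f2: float) -> float:
    """Sum of two unit-amplitude sine tones at time t (seconds)."""
    return math.sin(2 * math.pi * f1 * t) + math.sin(2 * math.pi * f2 * t)


def beat_envelope(t: float, f1: float, f2: float) -> float:
    """Amplitude envelope of two_tone, from the sum-to-product identity."""
    return abs(2 * math.cos(math.pi * (f1 - f2) * t))


def beat_frequency(f1: float, f2: float) -> float:
    """Number of envelope maxima (perceived loudness peaks) per second."""
    return abs(f1 - f2)


if __name__ == "__main__":
    f1, f2 = 1000.0, 1002.0  # illustrative tones 2 Hz apart
    print("beat frequency:", beat_frequency(f1, f2), "Hz")
    for t in (0.0, 0.125, 0.25, 0.5):
        # Envelope is 2 at t = 0 and 0.5 s (loud) and ~0 at t = 0.25 s (quiet).
        print(f"t = {t:5.3f} s  envelope = {beat_envelope(t, f1, f2):.3f}")
```

Here the two tones differ by only 2 Hz, yet the loudness swells twice per second, which is why beating exposes differences below the resolution quoted above.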

The semitone scale used in Western musical notation is not a linear frequency scale but a logarithmic one. Other scales have been derived directly from experiments on human hearing perception, such as the mel scale and the Bark scale (these are used in studying perception, but not usually in musical composition); these are approximately logarithmic in frequency at the high-frequency end, but nearly linear at the low-frequency end.
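The contrast between the logarithmic semitone scale and the perceptual scales can be made concrete with a few conversion formulas. This is a sketch under stated assumptions: A4 = 440 Hz as the reference pitch, O'Shaughnessy's formula for the mel scale, and Zwicker and Terhardt's (1980) approximation for the Bark scale; both perceptual scales have several published variants that differ slightly in their numbers.

```python
import math

A4_HZ = 440.0  # assumed reference pitch (concert A); not specified in the text


def equal_tempered(n: int, ref: float = A4_HZ) -> float:
    """Frequency n semitones above (n > 0) or below (n < 0) the reference."""
    return ref * 2.0 ** (n / 12.0)


def cents(f_low: float, f_high: float) -> float:
    """Interval size in cents (100 cents = one equal-tempered semitone)."""
    return 1200.0 * math.log2(f_high / f_low)


def hz_to_mel(f: float) -> float:
    """O'Shaughnessy's mel formula, one of several published variants."""
    return 2595.0 * math.log10(1.0 + f / 700.0)


def hz_to_bark(f: float) -> float:
    """Zwicker & Terhardt's analytic approximation of the Bark scale."""
    return 13.0 * math.atan(0.00076 * f) + 3.5 * math.atan((f / 7500.0) ** 2)


if __name__ == "__main__":
    print("one octave above A4:", equal_tempered(12), "Hz")
    # The same 3.6 Hz step is a larger musical interval at 1 kHz than at 2 kHz.
    print(f"3.6 Hz at 1 kHz: {cents(1000, 1003.6):.2f} cents")
    print(f"3.6 Hz at 2 kHz: {cents(2000, 2003.6):.2f} cents")
    # Low end: equal 100 Hz steps give similar mel steps (nearly linear).
    print([round(hz_to_mel(f)) for f in (0, 100, 200, 300)])
    # High end: octaves give similar mel steps (roughly logarithmic).
    print([round(hz_to_mel(f)) for f in (2000, 4000, 8000)])
    print(f"1 kHz = {hz_to_bark(1000):.2f} Bark")
```

With these definitions, the 3.6 Hz resolution quoted earlier is about 6.2 cents at 1 kHz but only about 3.1 cents at 2 kHz, and the mel values show the near-linear low end and roughly logarithmic high end described above.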

The intensity range of audible sounds is enormous. Human eardrums are sensitive to variations in sound pressure and can detect pressure changes from as small as a few micropascals (μPa) to greater than 100 kPa. For this reason, sound pressure level is also measured logarithmically, with all pressures referenced to 20 μPa (or 1.97385×10⁻¹⁰ atm). The lower limit of audibility is therefore defined as 0 dB SPL, but the upper limit is not as clearly defined. The upper limit is more a question of the level at which the ear will be physically harmed or at which there is a risk of noise-induced hearing loss.
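Because sound pressure is a field quantity, its level in decibels is 20 log₁₀ of the ratio to the reference pressure. A minimal sketch, using the 20 μPa reference from the text; the function names and the standard-atmosphere constant (101,325 Pa) used to check the atm conversion are illustrative additions.

```python
import math

P_REF_PA = 20e-6     # 20 µPa reference pressure, i.e. 0 dB SPL
ATM_PA = 101_325.0   # one standard atmosphere in pascals


def spl_db(p_rms_pa: float) -> float:
    """Sound pressure level (dB SPL) of an RMS pressure given in pascals."""
    return 20.0 * math.log10(p_rms_pa / P_REF_PA)


def pressure_pa(level_db: float) -> float:
    """RMS pressure in pascals for a level in dB SPL (inverse of spl_db)."""
    return P_REF_PA * 10.0 ** (level_db / 20.0)


if __name__ == "__main__":
    print(f"20 µPa  -> {spl_db(20e-6):.1f} dB SPL")
    print(f"100 kPa -> {spl_db(100e3):.1f} dB SPL")
    print(f"reference in atm: {P_REF_PA / ATM_PA:.5e}")
    print(f"doubling pressure adds {spl_db(2 * P_REF_PA):.2f} dB")
```

Under these definitions the 100 kPa figure corresponds to roughly 194 dB SPL, so the whole audible range spans nearly 200 dB, and each doubling of pressure adds about 6 dB.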

A more rigorous exploration of the lower limits of audibility shows that the minimum threshold at which a sound can be heard is frequency dependent. By measuring this minimum intensity for test tones of various frequencies, a frequency-dependent absolute threshold of hearing (ATH) curve may be derived. Typically, the ear shows a peak of sensitivity (i.e., its lowest ATH) between 1 and 5 kHz, though the threshold changes with age, with older ears showing decreased sensitivity above 2 kHz.[9]
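A threshold curve of this kind is often approximated analytically. The sketch below uses Terhardt's (1979) fit, a formula widely reused in perceptual-coding literature; it is an assumption chosen for illustration, describes a young listener with normal hearing, and does not model the age-related loss mentioned above.

```python
import math


def ath_db_spl(f_hz: float) -> float:
    """Approximate absolute threshold of hearing in dB SPL (Terhardt's fit).

    Assumed valid roughly from 20 Hz to 20 kHz for young, normal-hearing ears.
    """
    k = f_hz / 1000.0  # frequency in kHz
    return 3.64 * k ** -0.8 - 6.5 * math.exp(-0.6 * (k - 3.3) ** 2) + 1e-3 * k ** 4


def most_sensitive_frequency(lo: float = 20.0, hi: float = 20000.0,
                             step: float = 10.0) -> float:
    """Grid-search the frequency with the lowest modeled threshold."""
    n = int((hi - lo) / step)
    return min((lo + i * step for i in range(n + 1)), key=ath_db_spl)


if __name__ == "__main__":
    for f in (100, 1000, 3300, 10000):
        print(f"{f:>6} Hz: {ath_db_spl(f):6.1f} dB SPL")
    print("most sensitive near", most_sensitive_frequency(), "Hz")
```

A grid search puts the minimum of this curve near 3.3 kHz, inside the 1–5 kHz region quoted above, while the modeled threshold rises to roughly 23 dB SPL at 100 Hz, compared with about 3 dB SPL at 1 kHz.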

The ATH is the lowest of the equal-loudness contours. Equal-loudness contours indicate the sound pressure levels (dB SPL), across the range of audible frequencies, that are perceived as equally loud. Equal-loudness contours were first measured by Fletcher and Munson at Bell Labs in 1933 using pure tones reproduced via headphones, and the data they collected are called Fletcher–Munson curves. Because subjective loudness was difficult to measure, the Fletcher–Munson curves were averaged over many subjects.

Robinson and Dadson refined the process in 1956 to obtain a new set of equal-loudness curves for a frontal sound source measured in an anechoic chamber. The Robinson–Dadson curves were standardized as ISO 226 in 1986. In 2003, ISO 226 was revised, with new equal-loudness contours derived from data collected in 12 international studies.

Sound localization

Sound localization is the process of determining the location of a sound source. The brain uses subtle differences in loudness, tone, and timing between the two ears to allow us to localize sound sources.[10]

Localization can be described in terms of three-dimensional position: the azimuth or horizontal angle, the zenith or vertical angle, and the distance (for static sounds) or velocity (for moving sounds).[11]

Like most four-legged animals, humans are adept at detecting direction in the horizontal plane, but less so in the vertical, because their ears are placed symmetrically. Some species of owls have their ears placed asymmetrically and can detect sound in all three planes, an adaptation for hunting small mammals in the dark.[12]
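Of the timing cues mentioned above, the interaural time difference (ITD) is the easiest to estimate. The sketch below uses Woodworth's classic spherical-head approximation; the head radius and speed of sound are assumed typical values, not figures from the text. Because the model's only input is the horizontal angle, sources that differ only in elevation give identical ITDs, one simple way to see why symmetrically placed ears localize vertical position less well.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # in air at roughly 20 degrees C (assumption)
HEAD_RADIUS_M = 0.0875      # typical adult head radius used in textbooks (assumption)


def itd_woodworth(azimuth_deg: float,
                  radius_m: float = HEAD_RADIUS_M,
                  c_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Interaural time difference (seconds) for a distant source, rigid spherical head.

    Woodworth's approximation ITD = (r / c) * (theta + sin(theta)), with theta the
    azimuth in radians: 0 is straight ahead, 90 degrees is directly to one side.
    Intended for 0 <= azimuth_deg <= 90; elevation is ignored entirely.
    """
    theta = math.radians(azimuth_deg)
    return (radius_m / c_m_s) * (theta + math.sin(theta))


if __name__ == "__main__":
    for az in (0, 1, 10, 45, 90):
        print(f"{az:>2} deg -> {itd_woodworth(az) * 1e6:6.1f} us")
```

With these assumptions the ITD grows from 0 straight ahead to roughly 656 µs at 90°, and a 1° shift near the front changes it by about 9 µs, on the same order as the time-discrimination figure quoted under Limits of perception.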

Masking effects

Audio masking graph


Suppose a listener can hear a given acoustical signal under silent conditions. When the signal is played while another sound (a masker) is being played, the signal has to be stronger for the listener to hear it. The masker does not need to contain the frequency components of the original signal for masking to happen. A masked signal can still be heard even though it is weaker than the masker, provided it rises above the elevated (masked) threshold. Masking happens when a signal and a masker are played together, for instance when one person whispers while another person shouts: the listener does not hear the weaker signal because it has been masked by the louder masker. Masking can also happen to a signal before a masker starts or after a masker stops. For example, a single sudden loud clap can make sounds inaudible that immediately precede or follow it. Backward masking (of sounds that precede the masker) is weaker than forward masking (of sounds that follow it). The masking effect has been widely studied in psychoacoustical research. By varying the masker and measuring the threshold at each setting, one can plot a psychophysical tuning curve, which reveals the frequency selectivity of the auditory system. Masking effects are also used in lossy audio encoding, such as MP3.
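Simultaneous masking is often modeled by spreading a masker's level across the Bark scale. The sketch below is a toy model, not the psychoacoustic model of MP3 or any other codec: it assumes Zwicker and Terhardt's Bark approximation, the spreading function of Schroeder, Atal and Hall (1979), and a hypothetical fixed offset `offset_db`; it ignores the absolute threshold and forward/backward (temporal) masking.

```python
import math


def hz_to_bark(f: float) -> float:
    """Zwicker & Terhardt's analytic approximation of the Bark scale."""
    return 13.0 * math.atan(0.00076 * f) + 3.5 * math.atan((f / 7500.0) ** 2)


def spreading_db(dz: float) -> float:
    """Schroeder et al. (1979) spreading function in dB, dz = probe Bark - masker Bark.

    About 0 dB at dz = 0; falls off more slowly for dz > 0, so a masker spreads
    more toward higher frequencies than toward lower ones.
    """
    x = dz + 0.474
    return 15.81 + 7.5 * x - 17.5 * math.sqrt(1.0 + x * x)


def masked_threshold_db(masker_hz: float, masker_db: float, probe_hz: float,
                        offset_db: float = 10.0) -> float:
    """Toy masked threshold at probe_hz caused by a single tonal masker.

    offset_db is a hypothetical fixed margin; real coders derive it per band.
    """
    dz = hz_to_bark(probe_hz) - hz_to_bark(masker_hz)
    return masker_db + spreading_db(dz) - offset_db


def is_masked(masker_hz: float, masker_db: float, probe_hz: float,
              probe_db: float, offset_db: float = 10.0) -> bool:
    """True if the probe lies below the toy masked threshold."""
    return probe_db < masked_threshold_db(masker_hz, masker_db, probe_hz, offset_db)


if __name__ == "__main__":
    for probe_hz in (900, 1100, 2000, 4000):
        thr = masked_threshold_db(1000, 70, probe_hz)
        print(f"{probe_hz} Hz: threshold {thr:6.1f} dB, 45 dB probe masked: "
              f"{is_masked(1000, 70, probe_hz, 45)}")
```

With a 1 kHz masker at 70 dB, a 45 dB probe at 1.1 kHz falls under the modeled threshold while the same probe at 4 kHz does not; the asymmetry of `spreading_db` also reproduces the familiar observation that masking spreads further upward in frequency than downward.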

Missing fundamental

When presented with a harmonic series of frequencies in the relationship 2f, 3f, 4f, 5f, etc. (where f is a specific frequency), humans tend to perceive that the pitch is f. An audible example can be found on YouTube.[13]
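One common way to model this effect is autocorrelation: a waveform built only from the harmonics 2f to 5f still repeats every 1/f seconds, so its autocorrelation peaks at that lag even though no energy is present at f itself. The sketch below is illustrative rather than a model of the auditory system; the sample rate, search range, and f = 200 Hz are assumptions.

```python
import math


def harmonic_complex(freqs, fs, n_samples):
    """Sum of unit-amplitude cosines at the given frequencies (Hz)."""
    return [sum(math.cos(2 * math.pi * f * n / fs) for f in freqs)
            for n in range(n_samples)]


def autocorr_pitch(x, fs, fmin=50.0, fmax=1000.0):
    """Return fs / lag for the lag in [fs/fmax, fs/fmin] with the largest autocorrelation.

    The raw (unnormalized) sum shrinks as the lag grows because fewer products are
    summed, so among equal-height peaks at multiples of the period the shortest wins.
    """
    min_lag, max_lag = int(fs / fmax), int(fs / fmin)

    def r(lag):
        return sum(x[n] * x[n + lag] for n in range(len(x) - lag))

    best_lag = max(range(min_lag, max_lag + 1), key=r)
    return fs / best_lag


if __name__ == "__main__":
    FS = 8000                              # sample rate in Hz (assumed)
    f = 200.0                              # the "missing" fundamental
    tones = [2 * f, 3 * f, 4 * f, 5 * f]   # 400, 600, 800, 1000 Hz; nothing at 200 Hz
    signal = harmonic_complex(tones, FS, 800)  # 0.1 s of signal
    print("estimated pitch:", autocorr_pitch(signal, FS), "Hz")
```

The estimate lands on 200 Hz although the signal contains no 200 Hz component, mirroring the perceived pitch described above; the same principle is what lets small loudspeakers suggest bass notes they cannot reproduce.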

Software

Perceptual audio coding uses psychoacoustics-based algorithms.


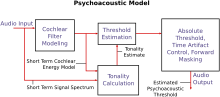

The psychoacoustic model provides for high-quality lossy signal compression by describing which parts of a given digital audio signal can be removed (or aggressively compressed) safely, that is, without significant losses in the (consciously) perceived quality of the sound.

The model can explain how a sharp clap of the hands might seem painfully loud in a quiet library but is hardly noticeable after a car backfires on a busy urban street. Exploiting such effects greatly improves the overall compression ratio: psychoacoustic analysis routinely yields compressed music files one-tenth to one-twelfth the size of high-quality masters, with a loss in perceived quality far smaller than that size reduction would suggest. Such compression is a feature of nearly all modern lossy audio compression formats, including Dolby Digital (AC-3), MP3, Opus, Ogg Vorbis, AAC, WMA, MPEG-1 Layer II (used for digital audio broadcasting in several countries), and ATRAC, the compression used in MiniDisc and some Walkman models.
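The one-tenth to one-twelfth figure can be checked with simple bitrate arithmetic. This sketch assumes the master is CD-format PCM (44.1 kHz, 16-bit, stereo) and that the compressed file uses a constant bitrate; the text specifies neither, and the function names are illustrative.

```python
def pcm_bitrate(sample_rate_hz: int, bits_per_sample: int, channels: int) -> int:
    """Bit rate of uncompressed PCM audio, in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


def size_ratio(source_bps: float, coded_bps: float) -> float:
    """How many times smaller the coded stream is (constant bitrates assumed)."""
    return source_bps / coded_bps


CD_BPS = pcm_bitrate(44_100, 16, 2)  # 1,411,200 bit/s

if __name__ == "__main__":
    for kbps in (112, 128, 160):
        print(f"{kbps} kbit/s -> {size_ratio(CD_BPS, kbps * 1000):.1f}:1")
```

Under these assumptions a common 128 kbit/s encoding is about 11 times smaller than the master, and coded bitrates between roughly 118 and 141 kbit/s fall within the one-tenth to one-twelfth range.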

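Psychoacoustics is based heavily on human anatomy, especially the ear's limitations in perceiving sound as outlined previously. To summarize, these limitations include the high-frequency limit of hearing, the absolute threshold of hearing, simultaneous masking, and temporal (forward and backward) masking.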

A compression algorithm can assign a lower priority to sounds outside the range of human hearing. By carefully shifting bits away from the unimportant components and toward the important ones, the algorithm ensures that the sounds a listener is most likely to perceive are most accurately represented.
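The idea of shifting bits toward the components a listener is most likely to perceive can be illustrated with a greedy allocator. This is a toy sketch, not the allocation loop of any particular codec: it assumes each band's quantization noise falls by about 6.02 dB per bit (the usual rule of thumb for a uniform quantizer) and that per-band signal and masking levels have already been computed by some psychoacoustic model; the band values are hypothetical.

```python
import math

DB_PER_BIT = 20 * math.log10(2)  # ~6.02 dB less quantization noise per extra bit


def allocate_bits(signal_db, mask_db, total_bits, max_bits=16):
    """Greedy per-band bit allocation driven by noise-to-mask ratio (toy model).

    Assumes a band quantized with b bits has noise at signal_db - 6.02 * b, so a
    band with 0 bits is simply dropped and its "noise" equals the signal itself.
    Each step gives one bit to the band whose noise is currently most audible
    (highest noise-to-mask ratio) and stops once every band's noise is masked.
    """
    bits = [0] * len(signal_db)

    def nmr(i):
        noise_db = signal_db[i] - DB_PER_BIT * bits[i]
        return noise_db - mask_db[i]

    for _ in range(total_bits):
        candidates = [i for i in range(len(bits)) if bits[i] < max_bits]
        if not candidates:
            break
        worst = max(candidates, key=nmr)
        if nmr(worst) <= 0:
            break  # remaining quantization noise already lies under the mask
        bits[worst] += 1
    return bits


if __name__ == "__main__":
    signal = [60.0, 40.0, 70.0, 20.0]  # hypothetical band levels, dB
    mask = [45.0, 50.0, 40.0, 30.0]    # hypothetical masking thresholds, dB
    print(allocate_bits(signal, mask, total_bits=12))  # [3, 0, 5, 0]
    print(allocate_bits(signal, mask, total_bits=6))   # [2, 0, 4, 0]
```

In the example, the second and fourth bands lie entirely below their masking thresholds and receive no bits, while most of the budget goes to the third band, whose signal-to-mask ratio is largest; with a generous budget the loop stops early once all remaining noise is masked.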

Music

Psychoacoustics includes topics and studies that are relevant to music psychology and music therapy. Theorists such as Benjamin Boretz consider some of the results of psychoacoustics to be meaningful only in a musical context.[14]

Irv Teibel's Environments series LPs (1969–79) are an early example of commercially available sounds released expressly for enhancing psychological abilities.[15]

Applied psychoacoustics

Psychoacoustic model


Psychoacoustics has long enjoyed a symbiotic relationship with computer science. Internet pioneers J. C. R. Licklider and Bob Taylor both completed graduate-level work in psychoacoustics, while BBN Technologies originally specialized in consulting on acoustics issues before it began building the first packet-switched network. Licklider wrote a paper entitled "A duplex theory of pitch perception".[16]

Psychoacoustics is applied within many fields of software development, where developers map proven and experimental mathematical patterns in digital signal processing. Many audio compression codecs, such as MP3 and Opus, use a psychoacoustic model to increase compression ratios. The success of conventional audio systems for the reproduction of music in theatres and homes can be attributed to psychoacoustics,[17] and psychoacoustic considerations gave rise to novel audio systems, such as psychoacoustic sound field synthesis.[18]

Furthermore, scientists have experimented, with limited success, with creating new acoustic weapons, which emit frequencies that may impair, harm, or kill.[19]

Psychoacoustics is also leveraged in sonification to make multiple independent data dimensions audible and easily interpretable.[20] This enables auditory guidance without the need for spatial audio, both in sonification computer games[21] and in other applications, such as drone flying and image-guided surgery.[22]

It is also applied today within music, where musicians and artists continue to create new auditory experiences by masking unwanted frequencies of instruments, causing other frequencies to be enhanced. Yet another application is in the design of small or lower-quality loudspeakers, which can use the phenomenon of missing fundamentals to give the effect of bass notes at lower frequencies than the loudspeakers are physically able to produce (see references).

Automobile manufacturers engineer their engines and even doors to have a certain sound.[23]


References

Notes

- Ballou, G. (2008). Handbook for Sound Engineers (Fourth ed.). Burlington: Focal Press. p. 43.

- Plack, Christopher J. (2005). The Sense of Hearing. Routledge. ISBN 978-0-8058-4884-7.

- Ahlzen, Lars; Song, Clarence (2003). The Sound Blaster Live! Book. No Starch Press. ISBN 978-1-886411-73-9.

- Graf, Rudolf F. (1999). Modern Dictionary of Electronics. Newnes. ISBN 978-0-7506-9866-5.

- Katz, Jack; Burkard, Robert F.; Medwetsky, Larry (2002). Handbook of Clinical Audiology. Lippincott Williams & Wilkins. ISBN 978-0-683-30765-8.

- Olson, Harry F. (1967). Music, Physics and Engineering. Dover Publications. pp. 248–251. ISBN 978-0-486-21769-7.

- Kunchur, Milind (August 2007). "Audibility of temporal smearing and time misalignment of acoustic signals" (PDF). boson.physics.sc.edu. Archived (PDF) from the original on 14 July 2014.

- Robjohns, Hugh (August 2016). "MQA Time-domain Accuracy & Digital Audio Quality". soundonsound.com. Sound On Sound. Archived from the original on 10 March 2023.

- Fastl, Hugo; Zwicker, Eberhard (2006). Psychoacoustics: Facts and Models. Springer. pp. 21–22. ISBN 978-3-540-23159-2.

- Thompson, Daniel M. (2005). Understanding Audio: Getting the Most out of Your Project or Professional Recording Studio. Boston, MA: Berklee.

- Roads, Curtis (2007). The Computer Music Tutorial. Cambridge, MA: MIT.

- Lewis, D. P. (2007). "Owl ears and hearing". Owl Pages. http://www.owlpages.com/articles.php?section=Owl+Physiology&title=Hearing. Retrieved 5 April 2011.

- Acoustic, Musical (9 March 2015). "Missing Fundamental". YouTube. Archived from the original on 20 December 2021. Retrieved 19 August 2019.

- Sterne, Jonathan (2003). The Audible Past: Cultural Origins of Sound Reproduction. Durham: Duke University Press. ISBN 9780822330134.

- Cummings, Jim. "Irv Teibel died this week: Creator of 1970s 'Environments' LPs". Earth Ear. Retrieved 18 November 2015.

- Licklider, J. C. R. (January 1951). "A Duplex Theory of Pitch Perception" (PDF). The Journal of the Acoustical Society of America. 23 (1): 147. Bibcode:1951ASAJ...23..147L. doi:10.1121/1.1917296. Archived (PDF) from the original on 2 September 2016.

- Ziemer, Tim (2020). "Conventional Stereophonic Sound". Psychoacoustic Music Sound Field Synthesis. Current Research in Systematic Musicology. Vol. 7. Cham: Springer. pp. 171–202. doi:10.1007/978-3-030-23033-3_7. ISBN 978-3-030-23033-3. S2CID 201142606.

- Ziemer, Tim (2020). Psychoacoustic Music Sound Field Synthesis. Current Research in Systematic Musicology. Vol. 7. Cham: Springer. doi:10.1007/978-3-030-23033-3. ISBN 978-3-030-23032-6. ISSN 2196-6974. S2CID 201136171.

- "Acoustic-Energy Research Hits Sour Note". Archived from the original on 19 July 2010. Retrieved 6 February 2010.

- Ziemer, Tim; Schultheis, Holger; Black, David; Kikinis, Ron (2018). "Psychoacoustical Interactive Sonification for Short Range Navigation". Acta Acustica United with Acustica. 104 (6): 1075–1093. doi:10.3813/AAA.919273. S2CID 125466508.

- CURAT. "Games and Training for Minimally Invasive Surgery". CURAT. University of Bremen. Retrieved 15 July 2020.

- Ziemer, Tim; Nuchprayoon, Nuttawut; Schultheis, Holger (2019). "Psychoacoustic Sonification as User Interface for Human-Machine Interaction". International Journal of Informatics Society. 12 (1). arXiv:1912.08609. doi:10.13140/RG.2.2.14342.11848.

- Tarmy, James (5 August 2014). "Mercedes Doors Have a Signature Sound: Here's How". Bloomberg Business. Retrieved 10 August 2020.
